IBM, Red Hat and Free Software: An old maddog’s view

Copyright 2023 by Jon “maddog” Hall

Licensed under Creative Commons BY-SA-ND

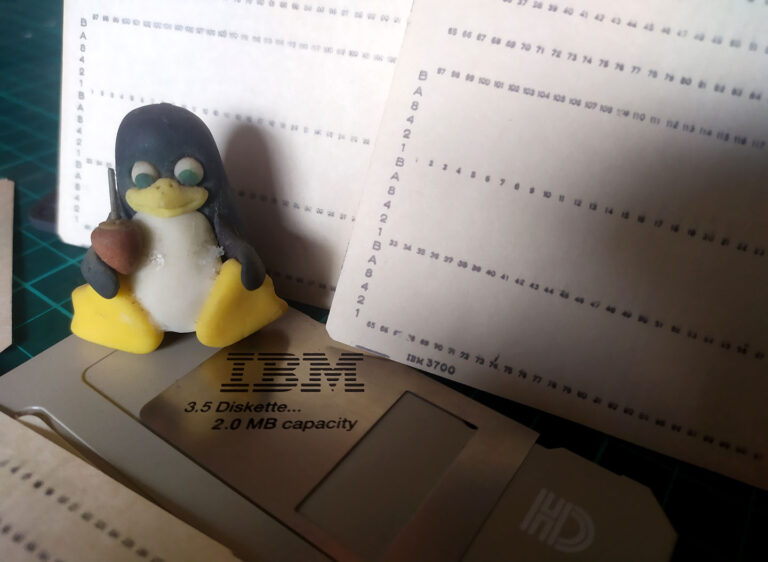

Photo: © Santiago Ferreira Litowtschenko

Several people have opined on the recent announcement of Red Hat to change their terms of sales for their software. Here are some thoughts from someone who has been around a long time and been in the midst of a lot of what occurred, and has been on many sides of the fence.

This is a fairly long article. It goes back a long way. People who know me will realize that I am going to tell a lot of details that will fit sooner or later. Have patience. Or you can jump close to the bottom and read the section “Tying it all together” without knowing all the reasoning.

Ancient history for understanding

I started programming in 1969. I wrote my programs on punched cards and used FORTRAN as a university cooperative education student. I learned programming by reading a book and practicing. That first computer was an IBM 1130 and it was my first exposure to IBM or any computer company.

Back at the university I joined the Digital Equipment User’s Society (DECUS) which had a library of software written by DEC’s customers and distributed for the price of copying (sometimes on paper tape and sometimes on magnetic tape).

There were very few “professional programmers” in those days. In fact I had a programming professor who told me I would NEVER be able to earn a living as a “professional programmer”. If you wrote code in those days you were a physicist, or a chemist, or an electrical engineer, or a university professor and you needed the code to do your work or for research.

Once you had met your own need, you might have contributed the program to DECUS so they could distribute it…because selling software was hard, and that was not what you did for a living.

In fact, not only was selling software hard, but you could not copyright your software nor apply for patents on your software. The way you protected your code was through “Trade Secret” and “Contract Law”. This meant that you either had to create a contract with each and every user or you had to distribute your software in binary form. Distributing your software in binary form back in those days was “difficult” since there were not that many machines of one architecture, and if they did have an operating system (and many did not) there were many operating systems that ran on any given architecture.

Because there were so few computers of any given architecture, and because those that did have an operating system might run any one of many (the DEC PDP-11 had more than eleven operating systems), many companies distributed their software in source code form or even sent an engineer out to install it, run test suites and prove it was working. Then, if the customer received the source code for the software, it was often put into escrow in case the supplier went out of business.

I remember negotiating a contract for an efficient COBOL compiler in 1975 where the license fee was 100,000 USD for one copy of the compiler that ran on one IBM mainframe and could be used to do one compile at a time. It took a couple of days for their engineer to get the compiler installed, working and running the acceptance tests. Yes, my company’s lawyers kept the source code tape in escrow.

Many other users/programmers distributed their code in “The Public Domain”, so other users could do anything they wanted with it.

The early 1980s changed all that with strong copyright laws being applied to binaries and source code. This was necessary for the ROMs that were being used in games and (later) the software that was being distributed for Intel-based CP/M and MS DOS systems.

Once the software had copyrights then software developers needed licenses to tell other users what their rights were in usage of that software.

For end users this was the infamous EULA (the “End User License Agreement” that no one reads) and for developers a source code agreement which was issued and signed in a much smaller number.

The origins and rise of Unix™

Unix was started by Bell Labs in 1969. For years it was distributed only inside of Bell Labs and Western Electric, but eventually escaped to some RESEARCH universities such as University of California Berkeley, MIT, Stanford, CMU and others for professors and students to study and “play with”. These universities eventually were granted a campus-wide source code license for an extremely small amount of money, and the code was freely distributed among them.

Unique among these universities was the University of California, Berkeley. Nestled in the tall redwood trees of Berkeley, California with a wonderful climate, close to the laid-back cosmopolitan life of San Francisco, it was one of the universities that Ken Thompson chose to take a magnetic tape of UNIX and use it to teach operating system design to eager young students. Eventually the students and staff, working with Ken, were able to create a version of UNIX that might conceivably be said to be better than the UNIX system from AT&T. BSD Unix had demand paged virtual memory, while AT&T was still a swapping memory model. Eventually BSD Unix had native TCP/IP while AT&T UNIX only had uucp. BSD Unix had a rich set of utilities, while AT&T had stripped down the utility base in the transition to System V from System IV.

This is why many early Unix companies, including Sun Microsystems (with SunOS), DEC (with Ultrix) and HP (with HP/UX) all went with a BSD base to their binary-only products.

Another interesting tidbit of history was John Lions. John was a professor at the University of New South Wales in Australia and he was very interested in what was happening in Bell Labs.

John took a sabbatical in 1978 and traveled to Bell Labs. Working along with Ken Thompson, Dennis Ritchie, Doug McIlroy and others he wrote a book on Version 6 of Unix that annotated all the source code for the Unix kernel, with a commentary on why that code had been chosen and what it did. Unfortunately in 1979 the licensing for Unix changed and John was not able to publish his book for over twenty years.

Unfortunately for AT&T John had made photocopies of drafts of his book and gave those to his students for comments, questions and review. When John’s book was stopped from publication, the students made photocopies of his book, and photocopies of the photocopies, and photocopies of the photocopies of the photocopies, each one becoming slightly lighter and harder to read than the previous generation.

For years Unix programmers measured their “age” in the Unix community by the generation of John’s book which they owned. I am proud to say that I have a third generation of the photocopies.

John’s efforts educated thousands of programmers in how elements of the Unix kernel worked and the thought patterns of Ken and Dennis in developing the system.

[Eventually John’s book was released for publication1, and you may purchase it and read it yourself. If you wish you can run a copy of Version 6 Unix2 on a simulator named SIMH which runs on Linux. You can see what an early version of Unix was like.]

Eventually some commercial companies also obtained source code licenses from AT&T under very expensive and restrictive contract law. This expensive license was also used with small schools that were not considered research universities. I know, since Hartford State Technical College was one of those schools, and I was not able to get Unix for my students in the period of 1977 to 1980. Not only did you have to pay an astronomical amount of money for the license, but you had to tell Bell Labs the serial number of the machine you were going to put the source code on. If that machine broke you had to call up Bell Labs and tell them the serial number of the machine where you were going to move the source code.

Eventually some companies, such as Sun Microsystems, negotiated a redistribution agreement with AT&T Bell Labs to sell binary-only copies of Unix-like systems at a much less restrictive and much less expensive licensing fee than getting the source code from AT&T Bell Labs directly.

Eventually these companies made the redistribution of Unix-like systems their normal way of doing business, since to distribute AT&T derived source code to their customers required that the customer have an AT&T source code license, which was still very expensive and very hard to get.

I should point out that these companies did not just take the AT&T code, re-compile the code and distribute them. They hired many engineers and made a lot of changes to the AT&T code and some of them decided to use code from the University of California Berkeley as the basis of their products, then went on to change the code with their own engineers. Often this not only meant changing items in the kernel, but changing the compilers to fit the architecture and other significant pieces of engineering work.

Then, in the early 1980s, Richard M. Stallman (RMS), a student at MIT, received a distribution of Unix in binary-only form. While MIT had a site-wide license for AT&T source code, the company that made that distribution for their hardware did not sell sources easily and RMS was upset that he could not change the OS to make the changes he needed.

So RMS started the GNU (“GNU is not Unix”) project for the purpose of distributing a freedom operating system that would require people distributing binaries to make sure that the people receiving those binaries would receive the sources and the ability to fix bugs or make the revisions they needed.

RMS did not have a staff of people to help him do this, nor did he have millions of dollars to spend on the hardware and testing staff. So he created a community of people around the GNU project and (later) the Free Software Foundation. We will call this community the GNU community (or “GNU” for short) in the rest of this article.

RMS did come up with an interesting plan, one of creating software that was useful to the people who used it across a wide variety of operating systems.

The first piece of software was emacs, a powerful text editor that worked across operating systems, and as programmers used it they realized the value of using the same sub-commands and keystrokes across all the systems they worked on.

Then GNU worked on a compiler suite, then utilities. All projects were useful to programmers, who in turn made other pieces of code useful to them.

What didn’t GNU work on? An office package. Few programmers spent a lot of time working on office documents.

In the meantime another need was being addressed. Universities who were doing computer research were generating code that needed to be distributed.

MIT and the University of California Berkeley were generating code that they really did not want to sell. Ideally they wanted to give it away so other people could also use it in research. However the software was now copyrighted, so these universities needed a license that told people what they could do with that copyrighted code. More importantly, from the University’s perspective, the license also told the users of the software that there was no guarantee of any usefulness, and they should not expect support, nor could the university be held liable for any damages from the use of the software.

We joked at the time that the licenses did not even guarantee that the systems you put the software on would not catch fire and burst into flames. This is said tongue-in-cheek, but was a real consideration.

These licenses (and more) eventually became known as the “permissive” licenses of Open Source, as they made few demands on the users of the source code, who were known as “developers”. The developers were free to create binary-only distributions and pass on the binaries to the end users without having to make the source code (other than the code they originally received under the license) visible to the end user.

Only the “restrictive” license of the GPL forced the developer to make their changes visible to the end users who received their binaries.

Originally there was a lot of confusion around the different licenses.

Some people thought that the binaries created by the use of the GNU compilers were also covered by the GPL even though the sources that generated the binaries were completely free of any licensing (i.e. created by the user themselves).

Some people thought that you could not sell GPL licensed code. RMS refuted that, but admitted that GPL licensed code typically meant that just selling the code for large amounts of money was “difficult” for many reasons.

However many people did sell the code. Companies such as Walnut Creek (Bob Bruce) and Prime Time Freeware for Unix (Richard Morin) sold compendiums of code organized on CD-ROMs and (later) DVDs for money. While the programs that were on these compendiums were covered by individual “Open Source” licenses, the entire CD or DVD might have had its own copyright and license. Even if it was “legal” to copy the entire ISO and produce your own CDs and DVDs and sell them, probably the creators of the originals might have had harsh thoughts toward the resellers.

During all of this time the system vendors such as Digital Equipment Corporation, HP, Sun and IBM were all creating Unix-like operating systems based on either AT&T System V or part of the Berkeley Software Distribution (in many cases starting with BSD Unix 4.x). Each of these companies hired huge numbers of Unix software engineers, documentation people, quality assurance people, product managers and so forth. They had huge buildings, many lawyers, and sold their distributions for a lot of money. Many were “system companies” delivering the software bundled with their hardware. Some, like Santa Cruz Operations (SCO), created only a software distribution.

Originally these companies produced their own proprietary operating systems and sold them along with the hardware, sensing that the hardware without an operating system was fairly useless, but later they separated the hardware sales from the operating system sales to offer their customers more flexibility with their job mix to solve the customer’s problems.

However this typically meant more cost for both the hardware and the separate operating system. And it was difficult to differentiate from your competitors external to your company and internal to your company. Probably the most famous of these conflicts was DEC’s VMS operating system and various Unix offerings….and even PDP-11 versus VAX.

DEC had well over 500 personnel (mostly engineers and documentation people) in the Digital Unix group along with peripheral engineering and product management to produce Digital Unix.

Roughly speaking, each company was spending in the neighborhood of 1-2 billion USD per year to sell their systems, investing in sophisticated computer science features to show that their Unix-like system was best.

The rise of Microsoft and the death of Unix

In the meantime a software company in Redmond, Washington was producing and selling the same operating system to run on the PC no matter whether you bought it from HP, IBM, or DEC, and this operating system was now moving up in the world, headed towards the lucrative hardware server market. While there were obviously fewer servers than there were desktop systems, the license price of a server operating system could be in the range of 30,000 USD or more.

The Unix Market was stuck between a rock and a hard place. It was becoming too expensive to keep engineering unique Unix-like systems while not only competing with other Unix-like vendors, but also fighting off Windows NT. Even O’Reilly Publishers, who had for years been producing books about Unix subsystems and commands, was switching over to producing books on Windows NT.

The rise of Linux

Then the Linux kernel project burst on the scene. The kernel project was enabled by six major considerations:

- A large amount of software was available from GNU, MIT, BSD and independent software projects

- A large amount of information about operating system internals was available on the Internet

- High speed Internet was coming into the home, not just industry and academia

- Low cost, powerful processors capable of demand-paged virtual memory were not only available on the market, but were being replaced by more powerful systems, and were therefore available to build a “hobby” kernel.

- A lot of luck and opportunity

- A uniquely stubborn project leader who had a lot of charisma.

The kernel project started in late 1991, and by late 1993 it and many distribution creators such as “Soft Landing Systems”, “Yggdrasil”, “Debian”, “Slackware” and “Red Hat” had begun to flourish.

Some of these were started as a “commercial” distribution, with the hope and dream of making money and some were started as a “community project” to benefit “the community”.

At the same time, distributions that were based on the Berkeley Software Distribution were still held up by the long-running “Unix Systems Labs Vs BSDi” lawsuit that was holding up the creation of “BSDlite” that would be used to start the various BSD distributions.

Linux (or GNU/Linux as some called it) started to take off, pushed by the many distributions and the press (including magazines and papers).

Linux was cute penguins

I will admit the following is my own thoughts on the popularity of Linux versus BSD, but from my perspective it was a combination of many factors.

As I said before, at the end of 1993 BSD was still being held up by the lawsuit, but the Linux companies were moving forward, and because of this the BSD companies (of which there were only one or two at the time) had nothing new to say to the press.

Another reason that the Linux distributions moved forward was the difference in the model. The GPL had a dynamic effect on the model of forcing the source code to go out with the binaries. Later on many embedded systems people, or companies that wanted an inexpensive OS for their closed system, might choose software with an MIT or BSD license, since that license would not force them to ship all their source code to their customers, but the combination of the GPL for the kernel and the large amount of code from the Free Software Foundation caught the imagination of a lot of the press and customers.

People could start a distribution project without asking ANYONE’s permission, and eventually that sparked hundreds of distributions.

The X Window System and Project Athena

I should also mention Project Athena at MIT, which was originally a research project to create a light-weight client-server atmosphere for Unix workstations.

Out of this project came Kerberos, a network-based authentication system, as well as the X Window System.

At this time Sun Microsystems had successfully made NFS a “standard” in the Unix industry and was trying to advocate for a PostScript-based windowing system named “NeWS”.

Other companies were looking for alternatives, and the client-server based X Window System showed promise. However X10.3, one release from Project Athena, needed some more development that eventually led to X11.x and on top of that were Intrinsics and Widgets (Button Boxes, Radio Boxes, Scroll-bars, etc.) that gave the “look and feel” that people see in a modern desktop system.

These needs drove the movement of developing the X Window System out of MIT and Project Athena into the X Consortium, with people paid full time to coordinate the development. The X Consortium was funded by memberships from companies and people that felt they had something to get from having X supported. The X Consortium opened in 1993 and closed its doors in 1996.

Some of these same companies decided to go against Unix System Labs, the consortium set up by Sun Microsystems and AT&T, so they formed the Open Software Foundation (OSF) and decided to set a source-code and API standard for Unix systems. Formed in 1988, it merged with X/Open in 1996 to form the Open Group. Today they maintain a series of formal standards and certifications.

There were many other consortia formed. The Common Desktop Environment (I still have lots of SWAG from that) was one of them. And it always seemed with consortia that they would start up, be well funded, then the companies funding them would look around and say “why should I pay for this, all the other companies will pay for it” and those companies would drop out to let the consortium’s funding dry up.

From the few to the many

At this point, dear reader, we have seen how software originally was written by people who needed it, whereas “professional programmers” wrote code for other people and required funding to make it worthwhile for them. The “problem” with professional programmers is that they expect to earn a living by writing code. They have to buy food, housing and pay taxes. They may or may not even use the code they write in their daily life.

We also saw a time where operating systems, for the most part, were either written by computer companies, to make their systems usable, or by educational bodies as research projects. As Linux matures and as standards make the average “PC” from one vendor become more and more electrically the same, the number of engineers needed to make each distribution of Linux work on a “PC” is minimal.

PCs have typically had difficulty in differentiating one from another, and “price” is more and more one of the mitigating issues. Having to pay for an operating system is something that no company wants to do, and few users expect to pay for it either. So the hardware vendors turn more and more to Linux….an operating system that they do not have to pay any money to put on their platform.

Recently I have been seeing some cracks in the dike. As more and more users of FOSS come on board, they put more and more demands on developers whose numbers are not growing fast enough to keep all the software working.

I hear from FOSS developers that too few, and sometimes no, developers are working on blocks of code. Of course this can also happen to closed-source code, but this shortness hits mostly in areas that are not considered “sexy”, such as quality assurance, release engineering, documentation and translations.

Funding the work

In the early days there were just a few people working on projects that had relatively few people using them. They were passionate about their work, and no one got paid.

One of the first times I heard any type of rumblings was when some people had figured out some ways of making money with Linux. One rumble that came up was an indignation that came because the developers did not want people to make money on code they had written and contributed for free.

I understood the feelings of these people, but I argued that if you did not allow companies to make money from Linux, the movement would go forward slowly, like cold molasses. Allowing companies to make money would cause Linux to go forward quickly. While we lost some of the early developers who did not agree with this, most of the developers that really counted (including Linus) saw the logic in this.

About this time various companies were looking at “Open Source”. Netscape was in battle with other companies who were creating browsers and on the other side there were the web-servers like Apache that were needed to provide servers.

At the same time Netscape decided to “Open Source” their code in an attempt to bring in more developers and lower the costs of producing a world-class browser and server.

The community

All through software history there were “communities” that came about. In the early days the communities revolved around user groups, or groups of people involved in some type of software project, working together for a common goal.

Sometimes these were formed around the systems companies (DECUS, IBM’s SHARE, Sun Microsystems’ Sun-sites, etc) and later bulletin boards, newsgroups, etc.

Over time the “community” expanded to include documentation people, translation people or even people just promoting Free Software and “Open Source” for various reasons.

However, in the later years it turned more and more into people using gratis software and not understanding Freedom Software. These were the same people who would use pirated software, not giving back at all to the community or the developers.

Shiver me timbers….

One of the other issues of software is the concept of “Software Piracy”, the illegal copying and use of software against its license.

Over the years some people in the “FOSS Community” have downplayed the idea of Intellectual Property and even the existence of copyright, without acknowledging that without copyright they would have no control over their software whatsoever. Software in the public domain has no protection from people taking the software, making changes to it, creating a binary copy and selling it for whatever the customer would pay. However, some of these FOSS people condone software piracy and turn a blind eye to it.

I am not one of those people.

I remember the day I recognized the value of fighting software piracy. I was at a conference in Brazil when I told the audience that they should be using Free Software. They answered back and said:

“Oh, Mr. maddog, ALL of our software is free!”

At that time almost 90% of all desktop software in Brazil was pirated, and so with the ease of obtaining software for gratis, part of the usefulness of Free Software (its low cost) was obliterated.

An organization, the [Business] Software Alliance (BSA), was set up by companies like Oracle, Microsoft, Adobe and others to find and prosecute (typically) companies and government agencies that were using unlicensed or incorrectly licensed software.

If all the people using the Linux kernel would pay just one dollar for each hardware platform where it was running, we would be able to easily fund most FOSS development.

Enter IBM

One person at IBM, by the name of Daniel Frye, became my liaison to IBM. Dan had understood the model and the reasons for having Open Source.

Like many other computer companies (including Microsoft) there were people in IBM who believed in FOSS and were working on projects on their own time.

One of Daniel’s focuses was to find and organize some of these people into a FOSS unit inside of IBM to help move Linux forward.

From time to time I was invited to Austin, Texas to meet with IBM (which, as a DEC employee, felt very strange).

One time I was there and Dan asked me, as President of Linux International(TM), to speak to a meeting of these people in the “Linux group”. I gave my talk and was then ushered into a “green room” to wait while the rest of the meeting went on. After a little while I had to go to the restroom, and while looking for it I saw a letter being projected on the screen in front of all these IBM people. It was a letter from Lou Gerstner, then the president of IBM. The letter said, in effect, that in the past IBM had been a closed-source company unless business reasons existed for it being Open Source. In the future, the letter went on, IBM would be an Open Source company unless there were business reasons for being closed source.

This letter sent chills up my back, because, working at DEC, I knew how difficult it was to take a piece of code written by DEC engineers and make it “free software”, even if DEC had no plans to sell that code…no plans to make it available to the public. After going through the process I had DEC engineers tell me “never again”. This statement by Gerstner reversed the process. It was now up to the business people to prove why they could not make it open source.

I know there will be a lot of people out there that will say to me “no way” that Gerstner said that. They will cite examples of IBM not being “Open”. I will tell you that it is one thing for a President and CEO to make a decision like that and another for a large company like IBM to implement it. It takes time and it takes a business plan for a company like IBM to change its business.

It was around this time that IBM made their famous announcement that they were going to invest a billion US dollars into “Linux”. They may have also said “Open Source”, but I have lost track of the timing of that. This announcement caught the world by shock, that such a large and staid computer company would make this statement.

A month or two after this Dan met with me again, looked me right in the eye and asked whether the Linux community might see IBM as trying to “take over Linux”. Could they accept the “dancing elephant” coming into the Linux community, or would they be afraid that IBM would crush Linux?

I told Dan that I was sure the “people that counted” in the Linux community would see IBM as a partner.

Shortly after that I was aware of IBM hiring Linux developers so they could work full time on various parts of Linux, not just part time as before. I knew people paid by IBM who were working on parts of “Linux” as disparate as the Apache Web Server.

About a year later IBM made another statement. They had recovered that billion dollars of investment, and were going to invest another billion dollars.

I was at a Linux event in New York City when I heard of IBM selling their laptop and desktop division to Lenovo. I knew that while that division was still profitable, it was not profitable to the extent that it could support IBM. So IBM sold off that division, purchased Price Waterhouse Cooper (doubling the size of their integration department) and shifted their efforts into creating business solutions, which WERE more profitable.

There was one more, more subtle issue. Before that announcement, literally one day before the announcement, if an IBM salesman had used anything other than IBM hardware to create a solution, there might have been hell to pay. However at that Linux event it was announced that IBM was giving away two Apple laptops as prizes in a contest. The implications of that prize giveaway were not lost on me. Two days before that announcement, if IBM marketing people had offered a prize of a non-IBM product, they probably would have been FIRED.

In the future a business solution by IBM might use ANY hardware and ANY software, not just IBM’s. This was amazing. And it showed that IBM was supporting Open Source, because Open Source allowed their solution providers to create better solutions at a lower cost. It is as simple as that.

Lenovo, with its lower overhead and focused business, could easily make a reasonable profit off those low-end systems, particularly when IBM might be a really good customer of theirs.

IBM was no longer a “computer company”. They were a business solutions company.

Later on IBM sold off their small server division to Lenovo, for much the same reason.

So when IBM wanted to be able to provide an Open Source solution for their enterprise solutions, which distribution were they going to purchase? Red Hat.

And then there was SCO

I mentioned “SCO” earlier as a distribution of Unix that was much like Microsoft. SCO created distributions, mostly based on AT&T code (instead of Berkeley) and even took over the distribution of Xenix from Microsoft when Microsoft did not want to distribute it anymore.

This was Santa Cruz Operations, located in the Santa Cruz mountains overlooking the beautiful Monterey Bay.

Started by a father/son team Larry and Doug Michels, they had a great group of developers and probably distributed more licenses for Unix than any other vendor. They specialized in server systems that drove lots of hotels, restaurants, etc. using character-cell terminals and later X-terms and such.

Doug, in particular, is a great guy. It was Doug, when he was on the Board of Directors for Uniforum, who INSISTED that Linus be given a “Lifetime Achievement” award at the tender age of 27.

I worked with Doug on several projects, including the Common Desktop Environment (CDE) and enjoyed working with his employees.

Later Doug and Larry sold off SCO to the Caldera Group, creators of Caldera Linux. Based in Utah, the Caldera crew were a spin-off from Novell. From what I could see, Caldera was not so much interested in “FreeDOM” Linux as having a “cheap Unix” free of AT&T royalties, but still using AT&T code. They continually pursued deals with closed-source software that they could bind into their Linux distribution to give value.

This purchase formed the basis of what became known as “Bad SCO” (when Caldera changed their name to “SCO”), which soon adopted a business tactic of suing Linux vendors because “SCO” said that Linux had AT&T source code in it and was a violation of their licensing terms.

This caused a massive uproar in the Linux Marketplace, with people not knowing if Linux would stop being circulated.

Of course most of us in the Linux community knew these challenges were false. One of the claims that SCO made was that they owned the copyrights to the AT&T code. I knew this was false because I read the agreement between AT&T and Novell (DEC was a licensee of both, so they shared the contract with us) and I knew that, at most, Santa Cruz Operations had the right to sub-license and collect royalties….but I will admit the contract was very confusing.

However no one knew who would fund the lawsuit that would shortly occur.

IBM bellied up to the bar (as did Novell, Red Hat and several others), and for the next several years the legal battle went on with SCO bringing charges to court and the “good guys” knocking them down. You can read more about this on Wikipedia.

In the end the courts found that at most SCO had an issue with IBM itself over a defunct contract, and Linux was in the clear.

But without IBM, the Linux community might have been in trouble. And “Big Blue” being in the battle gave a lot of vendors and users of Linux the confidence that things would turn out all right.

Red Hat and RHEL

Now we get down to Red Hat and its path.

I first knew Red Hat about the time that Bob Young realized that the most CDs his company, ACC Corp, sold were from this little company in Raleigh, North Carolina.

Bob traveled there and found three developers who were great technically but were not the strongest in business and marketing.

Bob bought into the company and helped develop the policies of the company. He advocated for larger servers and more Internet connectivity in order to give away more copies of Red Hat. It was Bob who pointed out that “Linux is catsup, and I will make Red Hat™ the same as Heinz™”.

Red Hat developed the business model of selling services, and became profitable doing that. Eventually Red Hat went out with one of the most profitable IPOs of that time.

Red Hat went through a series of Presidents, each one having the skills needed at the time until eventually the need of IBM matched the desires of the Red Hat stockholders.

It is no secret that Red Hat did not care about the desktop other than as a development platform for RHEL. They gave up their desktop development to Fedora. Red Hat cared about the enterprise, the companies that were willing to pay hefty price tags for the support that Red Hat was going to sell them with the assurance that the customers would have the source code in case they needed it.

These enterprise companies are serious about their need for computers, but do not want to make the investment in employees to give them the level of support they need. So they pay Red Hat. But most of those companies have Apple or Microsoft on the desktop and could not care less about having Fedora there. They want RHEL to be solid, and to have that phone ready, and they are willing to pay for it.

The alternatives are to buy a closed-source solution and do battle to get the source code when you need it, or to deal (on a server basis) with a solution that is not the hardware/software system solution needed by IBM.

“Full Stack” systems companies versus others

A few years ago Oracle made a decision to buy the Intellectual Property of Sun Microsystems. Of course Oracle had its products work on many different operating systems, but Oracle realized that if they had complete control of the hardware, the operating system and the application base (in this case their premier Oracle database engine) they would create “Unstoppable Oracle”.

Why is a full-stack systems company preferred? You can make changes and fixes to the full stack that benefit your applications and not have to convince/cajole/argue with people to get them in. Likewise you can test the full stack for inefficiencies or weak points.

I have worked for “full-stack” companies. We supported our own hardware. The device drivers we wrote had diagnostics that the operating system could make visible to the systems administrators to tell them that devices were ABOUT to fail, and to allow those devices to be swapped out. We built features into the system that benefited our database products and our networking products. Things could be made more seamless.

IBM is a full-stack company. Apple is a full-stack company. Their products tend to be more expensive, but many serious people pay more for them.

Why would companies pay to use RHEL?

Certain companies (those we call “enterprises”) are not universities or hobbyists. Those companies (and governments) use terms like “mission critical” and “always on”. They typically do not measure their numbers of computers in the tens or hundreds, but thousands….and they need them to work well.

They talk about “Mean Time to Failure” (MTTF) and “Mean Time to Repair” (MTTR) and want to have “Terms of Service Agreements” (TSA) which talk about so many hours of up-time that are guaranteed (99.999% up-time) with penalties if they are not met. And as a rule of thumb computer companies know that for every “9” to the right of the decimal point you need to put in 100 times more work and expense to get there.
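To make those “nines” concrete, here is a minimal sketch of the arithmetic behind an up-time guarantee. The function name and the 365-day year are assumptions made for illustration and are not taken from any real service contract.

```python
# Illustrative only: how much downtime per year each up-time target allows.
# Assumes a 365-day year; real contracts define their own measurement window.
MINUTES_PER_YEAR = 365 * 24 * 60


def allowed_downtime_minutes(availability_percent: float) -> float:
    """Return the minutes per year a system may be down and still meet the target."""
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


if __name__ == "__main__":
    for target in (99.0, 99.9, 99.99, 99.999):
        minutes = allowed_downtime_minutes(target)
        print(f"{target}% up-time allows about {minutes:.1f} minutes of downtime per year")
```

Under these assumptions, “five nines” (99.999%) leaves a little over five minutes of downtime in an entire year, while each additional “9” cuts the allowance by a factor of ten.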

And typically in these “Terms of Service” you also talk about how many “Points of Contact” you have between the customer and the service provider. The fewer the “Points of Contact” the less your contract costs because the customer supplied “point of contact” will have more knowledge about the system and the problem than your average user.

Also on these contracts the customer does not call into what we in the industry call “first line support”. The customer has already applied all the patches, rebooted the system, and made sure the mouse is plugged in. So the customer calls a special number and gets the second or third line of support.

In other words, serious people. Really serious people. And those really serious people are ready to spend really serious money to get it.

I have worked both for those companies that want to buy those services and those companies that needed to provide those services.

Many people will understand that the greater the number of systems that you have under contract the more issues you will have. Likewise the greater the number of systems you have under contract the lower the cost of providing service per system, if spread evenly across all those customers and systems who need that enterprise support.

IBM has typically been one of those companies that provided really serious support.

Tying it all together

IBM still had many operating systems and solutions that they used in their business solutions business, but IBM needed a Linux solution that they could use as a full-stack solution, just like Oracle did, giving IBM the ability to integrate the hardware, operating system and solutions to fit the customer better.

Likewise Red Hat Software, with its RHEL solution, had the reputation and engineering behind it to provide an enterprise solution.

Red Hat had focused on enterprise servers, unlike other well-known distributions, with their community version “Fedora” acting as a trial base for new ideas to be folded into RHEL at a later time. However RHEL was the Red Hat business focus.

It should also be pointed out that some pieces of software came only from Red Hat. There were few “community people” who worked on some pieces of the distribution called “RHEL”. So while many of the pieces were copyrighted then released under some version of the GPL, many contributions that made up RHEL came only from Red Hat.

Red Hat also had a good reputation in the Linux community, releasing all of their source code to the larger community and charging for support.

However, over time some customers developed a pattern of purchasing a small number of RHEL systems, then using the “bug-for-bug” compatible version of Red Hat from some other distribution. This, of course, saved the customer money, however it also reduced the amount of revenue that Red Hat received for the same amount of work. This forced Red Hat to charge more for each license they sold, or lay off Red Hat employees, or not do projects they might have otherwise funded.

So recently Red Hat/IBM made a business decision to limit their customers to those who would buy a license from them for every single system that would run RHEL and only distribute their source-code and the information necessary on how to build that distribution to those customers. Therefore the people who receive those binaries would receive the sources so they could fix bugs and extend the operating system as they wished…..this was, and is, the essence of the GPL.

Most, if not all, of the articles I have read have said something along the lines of “IBM/Red Hat seem to be following the GPL..but…but…but...the community!”

Which community? There are plenty of distributions for people who do not need the same level of engineering and support that IBM and Red Hat offer. Red Hat, and IBM, continue to send their changes for GPLed code “upstream” to flow down to all the other distributions. They continue to share ideas with the larger community.

In the early days of the DEC Linux/alpha port I used Red Hat because they were the one distribution who worked along with DEC to put the bits out. Later other distributions followed onto the Alpha from the work that Red Hat had done. Quite frankly, I have never used “RHEL” and have not used Fedora in a long time. Personal preference.

However I now see a lot of people coming out of the woodwork and beating their breasts and saying how they are going to protect the investment of people who want to use RHEL for free.

I have seen developers of various distributions make T-shirts declaring that they are not “Freeloaders”. I do not know who may have called any of the developers of CentOS or Rocky Linux, Alma or any other “clone” of any other distribution a “freeloader”. I have brought out enough distributions in my time to know that doing that is not “gratis”. It takes work.

However I will say that there are many people who use these clones and do not give back to the community in any way, shape or form who I consider to be “freeloaders”, and that would probably be the people who sign a business agreement with IBM/Red Hat and then do not want to live up to that agreement. For these freeloaders there are so many other distributions of Linux that would be “happy” to have them use their distributions.

/*

A personal note here:

As I have stated above, I have been in the “Open Source” community before there was Open Source, before there was the Free Software Foundation, before there was the GNU project.

I am 73 years old, and have spent more than 50 years in “the community”. I have whip marks up and down my back for promoting source code and giving out sources even when I might have been fired or taken to court for it, because the customer needed it. Most of the people who laughed at me for supporting Linux when I worked for the Digital Unix Group are now working for Linux companies. That is ok. I have a thick skin, but the whip marks are still there.

There are so many ways that people can help build this community that have nothing to do with the ability to write code, write documentation or even generate a reasonable bug report.

Simply promoting Free Software to your schools, companies, governments and understanding the community would go a long way. Starting up a Linux Club (lpi.org/clubs) in your school or helping others to Upgrade to Linux (upgradetolinux.com) are ways that Linux users (whether individuals, companies, universities or governments) can contribute to the community.

But many of the freeloaders will not even do that.

*/

So far I have seen four different distributions saying that they will continue the production of “not RHEL”, generating even more distributions for the average user to say “which one should I use”? If they really want to do this, why not just work together to produce one good one? Why not make their own distributions a RHEL competitor? How long will they keep beating their breasts when they find out that they can not make any money at doing it?

SuSE said that they would invest ten million dollars in developing a competitor to RHEL. Fantastic! COMPETE. Create an enterprise competitor to Red Hat with the same business channels, world-wide support team, etc. etc. You will find it is not inexpensive to do that. Ten million may get you started.

My answer to all this? RHEL customers will have to decide what they want to do. I am sure that IBM and Red Hat hope that their customers will see the value of RHEL and the support that Red Hat/IBM and their channel partners provide for it.

The rest of the customers who just want to buy one copy of RHEL and then run a “free” distribution on all their other systems no matter how it is created, well it seems that IBM does not want to do business with them anymore, so they will have to go to other suppliers who have enterprise capable distributions of Linux and who can tolerate that type of customer.

I will also point out that IBM and Red Hat have presented one set of business conditions to their customers, and their customers are free to accept or reject them. Then IBM and Red Hat are free to create another set of business conditions for another set of customers.

I want to make sure people know that I do not have any hate for people and companies who set business conditions as long as they do not violate the licenses they are under. Business is business.

However I will point out that as “evil” as Red Hat and IBM have been portrayed in this business change there is no mention at all of all the companies that support Open Source “Permissive Licenses”, which do not guarantee the sources to their end users, or offer only “Closed Source” Licenses….who do not allow and have never allowed clones to be made….these people and companies do not have any right to throw stones (and you know who you are).

Red Hat and IBM are making their sources available to all those who receive their binaries under contract. That is the GPL.

For all the researchers, students, hobbyists and people with little or no money, there are literally hundreds of distributions that they can choose, and many that run across other interesting architectures that RHEL does not even address.

1 https://en.wikipedia.org/wiki/A_Commentary_on_the_UNIX_Operating_System

2 https://gunkies.org/wiki/Installing_UNIX_v6_(PDP-11)_on_SIMH

About “Red Hat and IBM are making their sources available to all those who receive their binaries under contract. That is the GPL.” A common misapprehension.

Linux is licensed under GPLv2 only. GPLv2 gives three options for source code transmission: either bundle with the binaries, allow any third party to obtain source code for at least three years for the cost of copying, or, for non-commercial works only, inform the recipient where the sources can be gotten from.

There are many other licenses applicable to packages, for instance, in RHEL, and thanks to the distros which are willing to untangle them downstream, but if Linux itself does not come with a copy of its source code, it is not sufficient to provide the source code to only the recipient – the source code must be published and provided to anyone asking for it and without further restrictions.

Your understanding is flawed.

The first party in the GPLv2 is the copyright holder.

The second party in the GPLv2 is that party which is choosing to redistribute the software, possibly with modifications.

The third party is the target of the redistribution.

You are mistaking random fourth parties as the third parties.

Thank you for the succinct explanation of the GPLv2 license parties.

No, *your* understanding is flawed. Third parties means *everyone*. https://www.gnu.org/licenses/gpl-faq.en.html#TheGPLSaysModifiedVersions

I believe you misunderstand the GPLv2 license as @kazinator points out below.

In addition, when you talk about “Linux” being licensed as GPLv2, you are probably talking about the Linux kernel, whose copyright holders are legion, some of whom have left the project, some of whom are dead and whose estates are not available. Ergo the kernel stays at GPLv2.

Other projects included in the RHEL release have different copyright holders, and different licenses. As I said in the article, some of the packages may be solely the property of Red Hat, and certainly the compendium of packages can have a separate copyright and license.

100% agreed, most level-headed and honest look at the whole thing.

Fascinating Read!! Thanks a bunch, Jon 🙂

Thank you for this incredible history lesson. I learned a lot from reading this and people I respect highly speak very highly of you!

Thanks for the historic view. In the end I think IBM is in it for the ownership of the enterprise. Red Hat has been absorbed by IBM and thus are now moving into a mode to make money. They need to if they want to survive. Too bad that they don’t honor the GPLv2 license model. Hope they will be fought in court to see how that license interpretation holds.

I believe that Red Hat is following the GPLv2 license model, and in any case that license typically affects the kernel, but not the rest of the distribution as I have noted in the article and in the comments above.

As far as IBM being “in it for the ownership of the enterprise”, anyone who has followed IBM’s business models for the past 50 or more years knows that is IBM’s target. They are not in the consumer market, the hobbyist market, etc. They, like a lot of other computer companies (Apple, Microsoft, etc.), are also in the educational market.

RHEL has also been in the enterprise market for a long time. “Enterprise” is even in their name. Fedora was a strategy to keep community ties and act as a sandbox for new ideas. Red Hat has been open about that.

Red Hat has been making money, but in business there is also the concept of ROI (return on investment) and if you are not maximizing your revenue with the resources you have, you are not exercising fiscal responsibility to your stockholders.

I do not think that IBM or Red Hat will be taken to court over this. Yes, the change in terms will mean that some of their customers will choose a different distribution, but that is doing business….happens all the time. And I hope that the “clone makers” will stop trying to make clones of RHEL and instead (as I said in my paper) make a BETTER RHEL, a BETTER Red Hat.

However, making a BETTER Red Hat is more than just making a better set of bits in an ISO. It is understanding your customer’s business, setting up and training channel partners, setting quantity discount schedules that make sense. Things that Red Hat has been doing for close to thirty years.

Presently, I am working on a long-running project that used Centos as a base. The need was for a stable Linux distribution, and the choice of Centos was pretty much arbitrary. At present I would likely choose Debian. The recent nonsense around Centos is a pain.

Not sure about the size of the set:

“[…] some customers developed a pattern of purchasing a small number of RHEL systems, then using the “bug-for-bug” compatible version of Red Hat from some other distribution”.

For those large enterprises that keep limited technical staff, for whom buying support makes sense, does the above model work? Is this truly a significant sized set? (I have no notion, or any notion of how to measure.)

Will admit I am entirely unfamiliar with those sort of enterprises (other than as customers). Never been on that side of the fence. Rarely have sought support from an operating system vendor, and almost never got anything useful.

There is the group that deploys Centos in their datacenter and/or cloud. They need a stable compatible base, but are not needing support, or the friction of managing licenses. (Yeh, I do not really know that world. Did write cloud infrastructure software as a past project.) Guessing this is the largest set, and only occasionally needing the support of paid-licenses.

In my case, for Linux built into advanced military radars, managing dozens of licenses on each for widely deployed systems that might be in use for decades – is friction I do not need. Support calls to Redhat are unlikely.

But then, I guess I am a freeloader. Was a minor early contributor to CVS, Apache Tomcat, and Mortbay Jetty – long ago. Not so much recently. (Did vote for “comp.os.linux”, if that counts.)

And yes, my reading of “forbid” puts Redhat in violation of GPL, aside from everything else.

“In my case, for Linux built into advanced military radars, managing dozens of licenses on each for widely deployed systems that might be in use for decades – is friction I do not need. Support calls to Redhat are unlikely.”

In that case choosing Debian would probably be the right way to go.

I can’t say too much but I worked at Red Hat for a very long time and left last year. There are absolutely massive customers that are buying RHEL licenses and then deploying 100x (or more) as many systems on Centos. Some of it was suspected based on support requests, but the scale came out after the first Centos EOL announcement about 18 months ago.

Hello,

You didn’t speak to it, so I’m not sure you’re aware of a specific complication of the RHEL source code access situation.

There are several community/partners that produce kernel module binaries for RHEL that are now in limbo because of the change in source access.

For example the CentOS kmod SIG used to build binary kernel module rpm packages for both RHEL and CentOS (for example btrfs kmods, just to name one in particular).

They can no longer do that work because they can’t meet the source requirements of the GPL without risking their contractual agreement with RH for RHEL updates. Part of that contractual agreement is you can’t share the sources with 3rd parties. But a kmod binary distributor must do that as the generally accepted interpretation is the binary kernel modules are derived works of the kernel.

This also potentially impacts OEM partners who provide kernel binaries. I’ve identified at least one commercial hardware vendor who is providing binary kernel modules of the latest RHEL update kernel, which has no public source availability. They have increased risk of liability of a GPL violation now because the RHEL kernel source isn’t public. This is a problem. It’s a problem you didn’t speak to.

These efforts are not clones, if anything they are value-add community efforts that RH has condoned and in the case of the CentOS kmod SIG in particular has explicitly blessed. But worse are the potential impacts on OEM partners that work hand in glove with RH to sell RHEL provisioned systems. If they have kmod binaries available for public access(as I said I’ve found at least one vendor who does) RH has opened them up to potential liability by contractually restricting them from providing source code to anyone (like me as a non RHEL user) to obtain the kernel source used to build the kernel module binary. This is a problem?

Is this evil? No, never ascribe to malice what can be explained by incompetence. But it is a problem. RH didn’t do its due diligence to understand the impacts of its decision in establishing the source wall. RH isn’t blameless in how they handled this situation and unfortunately, it is its ecosystem partners who carry the liability burden in the wake of the decision. That’s not evil, but it is unfriendly.

I have assurances RH legal is looking at this. Assurances I obtained 2 weeks ago, after bringing up the problem a week prior to that. It’s a month now basically sitting on my hands, biting my tongue to undo the impact on RH’s own contributor and partner ecosystem. There’s no excuse for a month long delay, this legal review of the impact on binary kernel module builds should have happened before the source wall went up.

I have explained several times in the comments above that the GPL V2 license of the kernel is different from the license of the RHEL distribution. I believe it was not the intent of IBM nor Red Hat to impact the development of the kernel. You should not need Red Hat’s permission to push kernel sources upstream, nor for that matter to push kernel sources or patches downstream to customers who need them. As I have said before, the essence of the GPL was to give end users the ability to fix bugs and modify the binary code that they received. You are simply a third party supplying that expertise to them. As you said, this is not a “clone”.

It takes time to have lawyers do anything. I recently went through a bout with lawyers over a trademark issue, and one of the premier legal firms in International IP (you might recognize the name if I told you) took some time in understanding the impact of what someone was trying to do.

You could choose to go ahead anyway, and let Red Hat hit you with a “cease and desist” order, which might raise the priority of their legal team. Recognize that IANAL, and this is not legal advice.

My first language is not English, but my guess is you did not want to write: father/con team

Judging by what I’ve seen of the ‘backlash’, for lack of a better word in my vocabulary, IBM/Redhat is mostly following the GPL as far as I can see*, but I think what people find a bit icky is seeing a community member going from everything in the open to source only for their customers.

Not to mention all the people who don’t know what these licenses and specifically the GPL does and does not allow.

* although terminating customer contracts for those who distribute GPL-code it seems like an extra restriction on the GPL which it doesn’t allow it ?

Has Red Hat canceled people’s contracts, or simply told them that when the contracts expire Red Hat will not renew them unless the customer agrees to meet these terms? There is a big difference. Likewise I do not think that Red Hat will come after current customers who use Red Hat and clones. Their systems will not stop working. The customers will have the source code for them and can get their support elsewhere if they wish.

If I am wrong, please correct me.

Thank you for the “father/con team” catch, I will see that it is corrected.

“Father/con” is now corrected to “Father/son”.

[SCO] “Started by a father/con team Larry and Doug Michels,”

Probably meant “son”, but maybe just foreshadowing? It made me laugh anyway.

Doug Michels, the son of that team, was anything other than a “con”. I will have that corrected.

Mr. Hall: thank you for this insightful article. I really enjoyed reading about the history and clarifying the reasons for Red Hat’s changes.

I do try to contribute back with our Linux user group (Flux in South Florida). In some ways it’s easier than when I started, but in other ways the complexity seems overwhelming even with 20 years of experience with Linux.

But really I’m just writing to say thanks for the inspiration over the years. I had the opportunity to meet you once at MetroLink but have appreciated your tireless work for Linux for years as it has given me a career.

“Giving back” to the community is not just about writing code, which anyone can see has become more complex as the needs of the systems have become more complex.

I also want to make sure that I have *never* said that any of the clone makers are “freeloaders”. However there are a lot of users of Free Software that might fit that description, because they never give back to the community in any way.

I used to tell people that just using Free Software was useful to the community, but I will modify that to intelligently using Free Software, understanding that (as RMS used to say) “It is free as in freedom, not free as in free beer”. To this I would add that there are few things that are truly free. The water we drink needs to be kept safe, the sewage has to be treated, the air has to be kept clean. All of this costs money.

So at a minimum people should understand why they are using Free Software and then tell others why they should be using and contributing to Free Software.

It’s an interesting history and reasonable take. I think the primary issue is that IBM is attempting to put the genie back into the bottle in order to preserve their dwindling RHEL client-base, and as a result, may have simply hastened the exodus.

Unfortunately for them, they produced one of two standard bases that many have come to rely on, if only for the sake of compatibility with other similar systems. Anyone who couldn’t afford RHEL used CentOS or something similar because that’s what they were familiar with, and that spread like a wildfire. Now those same organizations or users need to switch, or simply be SOL. It’s the same way Windows became so ubiquitous; turn a blind eye to piracy and reap the market penetration benefits. Become the standard in the workplace, and home users fall in line—and vice versa.

By pulling back the way they did, they’ve left quite a few people with no recourse. Migration is expensive, even to a nominally compatible system. Licensing and switching to RHEL specifically may not be in the budget. Of course people are going to lash out as a result.

That said, I don’t see an easy way out of this. Nothing is free, and IBM/Red Hat need to be compensated for their efforts in the Linux space by _somebody_ or they’ll eventually stop doing it. Like you said, they’ve been a huge ally in the past, and souring them on the experience does us no favors.

It is an unfortunate situation, but there have been others in the past.

I will remind people that at one time Red Hat Software was the “go-to” distribution for High Performance Computing (HPC) systems that used to be called “Beowulf” systems.

I was at an HPC conference when Red Hat announced that they were going to be charging on a per-CPU basis for their support, which almost overnight caused the HPC community to go to other distributions. I did not even have time to contact my friends at Red Hat to say “WTF? What were you thinking?”

Thank you for the nice read and in-depth history !

What disappoints me and seems somehow inevitable in our economic system is that RedHat has to eradicate all his big rivals in this domain.

After centos, the next big one is Debian it seems to me.

It’s telling to me that you describe Debian as being quite like CentOS, as a competitor to RedHat. That’s some fast and loose use of words. CentOS *was* RHEL, with the trademarks removed. There is nothing about RedHat’s behavior that would suggest it would try to “eradicate” its competitors. RedHat’s recent decisions have only challenged its _copiers_, not its competitors, and to the contrary, should stimulate a notable boost to real competitors like Debian (and Suse, and Ubuntu). It’s regrettable that, in order to make an argument such as you make, you distort/hide the real differences and issues.

I see no reason why Debian (or SuSE, or Ubuntu, or several other “big rivals”) will be eradicated by this move of Red Hat any more than they have been eradicated in the past 30 years. In fact Debian started as a distribution the same year that Red Hat did, and Ubuntu, which started as a “clone” of Debian, has moved to its own distribution with its own following.

If anything, Red Hat’s decision might force people to go to these other distributions or follow Alma Linux with their decision to be binary compatible and form real competition in the Linux space.

Jon,

Wow! What a fantastic write up. Thank you for taking the time to write this very informative history. To your point in the very end:

From a business perspective: I wholeheartedly agree. It is within the rights of IBM/RHEL to limit or restrict sources to their binary releases only to paying customers. This is the GPL. They are not breaking any rules here.

From an ethical perspective: It does seem quite unethical (at least from my perspective) that IBM/RHEL are consuming open source projects (often as a non-paying consumer), available to the general public, apply their changes and then in turn, limit the availability of those changes to paying customers.

From a development perspective: I would hate to see how much this approach hinders progress and stability of bug/feature isolation/patching. I do not have the numbers in front of me but I would like to think that those who used those free versions of their enterprise distribution often contributed code back, further stabilizing the distribution.

Red Hat has a long [and wonderful] history of creating a standard to which many direct and indirect consumers adhered. And because of that standard, it was relatively easy for RHEL customers to utilize applications and repositories that were not directly developed in RHEL. I’d hate to think of the fragmentation introduced as a result of this.

“From an ethical perspective: It does seem quite unethical (at least from my perspective) that IBM/RHEL are consuming open source projects (often as a non-paying consumer), available to the general public, apply their changes and then in turn, limit the availability of those changes to paying customers.”

This depends on the project, the licensing of that project and the way the project is run. Many projects are delivered across many distributions. Let’s take a simple one like Libre Office. I am not sure if Libre Office is delivered as a part of RHEL, because I have never used RHEL, but if it was and a RHEL customer found a bug and asked Red Hat to fix it, would Red Hat keep that change to itself and keep applying it every time Libre Office released? I think not. If there was a major addition to Libre Office that really benefited RHEL customers perhaps Red Hat might add it as a module in the RHEL release alone, but again, I do not think so. The cost of re-implementing and testing it might be more than the perceived value of the change.

On the other hand, I wrote extensively in my article that Red Hat and IBM have continuously given back to many projects (kernel, web servers, security patches, etc.) that went to other distributions too. I think if you put on the scales the code contributed by those two companies and their employees, they would weigh in quite well against code from other sources.

From what I have seen from Red Hat management on this topic, the “contributed code back” from the clone users did not pay for the business lost by non-payment of service fees.

RHEL is downstream from Fedora. Why not use Fedora? Or use it as an upstream if you really feel like you need to fork RHEL.

Yessir. Or, as I said several times: do not clone RHEL, make a better RHEL.

My understanding of “CentOS Stream” is that it is where the updates to an eventual RHEL stream are placed. Then at a certain time, the stream is taken to build the RHEL release, but the Source RPMs (SRPMS) for RHEL may not be *exactly* what the code had been in the CentOS stream. Therefore the functionality of the CentOS build might not be *exactly* what RHEL was, and the functionality of the binaries for “CentOS” might not be *exactly* the same for RHEL, which is what the CentOS users wanted.

Fedora is probably further off than the CentOS Stream, with functionality that might never make it into RHEL, and RHEL functionality that might never appear in Fedora.

This is an extremely well written article. I am a former Red Hat employee. When I first joined Red Hat I did not understand why anybody would _pay_ for Red Hat Enterprise Linux. I used to refer to it as Red Hat Expensive Linux. But after looking at a few of the customer issues that we used to deal with on a daily basis, I completely changed my mind and attitude toward RHEL. Red Hat deserved to be paid for the hard work they put in to produce an enterprise-ready product.

It takes a lot of money to hire motivated developers, certify the operating system on a myriad of different hardware and software combinations, write documentation, do quality assurance and, most important, provide timely, quality technical support.

In 1995 a small group of people and companies formed Linux International, a non-profit whose members thought that GNU/Linux had a future for commercial, enterprise businesses, education and government.

We realized that this meant more than just “code”. We needed trained professionals, we needed good trademark protection, we needed good marketing that showed the value of Free Software was NOT just that it often came as low or no cost.

So we started the Linux Mark Institute, which protected the term “Linux” for everyone to use for legitimate purposes. We formed the Linux Standard Base project, to allow code to work across distributions. We helped form the non-profit Linux Professional Institute (LPI) to help certify Systems Administrators and other Linux Professionals in an open way, which now has over 200,000 certified Linux Professionals in over 180 countries around the world.

Eventually many of these projects were folded into the better funded Linux Foundation, although LPI remains independent to this day.

Of the twelve companies that helped form this organization two were Red Hat and IBM.

Jon is spot on in his article, but it doesn’t change the fact I left Red Hat long ago and I do not miss it. I have been in the IT field about 40 years and my colleagues always ask me why No RHEL certifications … I said I support ‘Linux’ which is open source for anyone and anyone could support it – you do not require a certification, just proof you can do the job … my colleagues hated me >< lol

You are talking to the Chair of the Board of Directors of the Linux Professional Institute (lpi.org). One of the main things we do is certify Linux Professionals with tests, mostly for system administration people, but more recently for DevOps and even FreeBSD.

Of course having a certification does not mean you will be a good systems administrator, just like having a college degree in electrical engineering does not mean you are a good electrical engineer.

If you take a good look at what a university does, it is these things:

o develop a curriculum that guides you to get the knowledge you need

o develop courses that meet that curriculum

o teach the courses

o test the students to see if they learned the information

o eventually offer a certification (diploma)

However, I will point out that after you graduate with a four-year degree you are never “de-certified”. Your degree is never taken away from you.

That is because universities do not teach you a job. They teach you how to learn on your own.

When you are in grade school there is nothing on the test that does not come out of the teacher’s mouth.

In university the professor says:

o here is the syllabus of the class

o there is the library

o there are your other students

o there are industry people for you to interview

o (and today) there is the internet

They teach you how to learn on your own. Then when the technology changes they expect you to learn the new technology.

In speaking to young people I am often asked “Do I need a university education to be a good programmer”. The answer is “no”.

However, few people have the skills to learn on their own. This is why the university drop-out rate for freshmen is often over 50%.

If people can learn on their own, they tend to learn only the things they perceive they need, or that they enjoy learning. Often this is not enough to be effective in life.

I tell young people interested in computers that you need to know both “up” and “down”. Learn the languages and APIs, but also learn the algorithms and the way the machine works. I do not expect people to program in assembly language these days, but they should understand the difference in ISAs and how cache works, etc.

Certifications are not just for the job seeker. They are for the hiring person. In the days when Linux was just starting many employers could barely say the word “linux”.

[As a side note there used to be an audio file in the kernel where Linus Torvalds said “Hello, I am Linus Torvalds and I pronounce ‘Linux’ as ‘Linux’.” It was funnier if you actually heard it.]

For these CIOs, CTOs, and CEOs, having certified people (especially ones that could not have 40 years of Linux experience because Linux was only around for 10 years) was useful.

Probably like you I was hired by Bell Laboratories to be a Unix systems administrator without ever having seen a Unix system, but by that time (1977) I had worked on dozens of different systems from six or seven companies, trained myself in three assembly languages (PDP-8, IBM 360/370 BAL, and PDP-11 MACRO-11) by reading books and practicing and taught operating system design and compiler design at the college level, so Bell Labs “took a chance”.

No one has asked to see my college transcripts for the past 40 years. I have no certifications other than my BS in Commerce and Engineering (1973) and my MSCS from RPI in 1977.

However, I would not blame someone who wanted to hire me as a Linux systems administrator in a complex network for asking to see a couple of recent certifications, since I have not done that work in the past 20 years.

YMMV.

Personally I fall under that 50% dropout with the skills to learn on my own, but I never put two and two together to realize that self-taught people tend to limit what they learn to perceived needs or interests. I appreciate you pointing that out. I’ve always recognized the value of understanding the “full stack” and pursuing knowledge of unrelated or uninteresting things in tech, so I struggled to understand why others didn’t seem to do the same. Though, on the flip side, I do shy away from topics outside of tech, but fully acknowledge as much, saying things like “I’m dumb outside of tech” and “Tech is all I know.” I can see now why a self-taught person could be harder to work with and thus a higher risk to hire.

The other thing you opened my eyes to was about certificates. Universities teaching one how to learn is a concept that I grew up hearing but, already knowing how to learn, it kinda defeats the purpose of paying to learn how to do it. Especially if one isn’t able to take courses that will expand their knowledge in related career areas to stay engaged and gain any form of value from the money spent. Certificates seem like a better solution to prove my skills, but the number of people with certified “book knowledge” bothered me. In a time where people seem to treat certificates as achievements, it definitely comes off as a flawed system. Being in the earlier years of life, I never considered the value of a certificate where it was all kinda new.

Although a reply to someone else, I really appreciate your insight and perspective.

Jon, thanks for the write up. A very nice summary and hello Chris.

Wookie, always a pleasure to hear from you.

Jon, the breadth and depth of your experience — not to mention that you can remember and chronologise so much of it — leaves me gobsmacked as always. What a writeup!

Ken, you were one of the people I thought of as I wrote it.

I forgot to ask: Back in the day, Red Hat had a program office that helped get gratis licences to hobbyists and other non-commercial entities wanting to use (the precursor to) RHEL. Is that something they’re supporting as part of this change, or are individuals shut out if they can’t afford a full RHEL licence? And then there’re the IaaS hosting providers who offered RHEL platforms..

Yes, you can obtain, I believe, up to 16 RHEL licenses for free with self support. Aka fresh RHEL, not stale CentOS or other downstream clones. Or if you want you can get CentOS Stream, which is essentially the code RHEL is made from. Red Hat greatly expanded the ability to get free RHEL copies from Red Hat in recent times.

I believe this is known as the RHEL Developer’s license, has its own T’s and C’s and needs to be updated yearly (but at no cost):

https://developers.redhat.com/articles/faqs-no-cost-red-hat-enterprise-linux#general

You are correct, sir. (Hi Ken! I miss you) I’m a Red Hat Employee of over 23 years.

This would be a magnificent history/narrative, if only I were not quite suspicious of some of what I (I, I said) would characterize as dubious postulates and axioms.

I am sorry, I have no idea what you are talking about.

Such an exciting read! Thank you for sharing!

Putting recent events in perspective is really useful and makes them sound a lot more reasonable. Thanks Jon!

I approve of this history, having started with punch cards, and transitioned from VMS/CTSS to Unix/CTSS at Berkeley and LBL in the 80’s, continuing at the first Unix-only supercomputer center, at NASA, where my group hosted the site/repository of one of the *BSD’s in one of our racks. Tho I have no experience of RHEL, having gotten further and further from ops since the 90’s.

I’ll be using AGPL in releasing my psychological mirror for switching paradigms by opening oneself to an AI and finding direct/natural vs. commercialized rewards, while making interactively-introspective thinking/modeling oxytocinal. I think it needs to be run locally, so-intimate is the data it gleans, but AGPL would at least force hypothetical server providers to give source for how they tweak the ‘mind’ of the mirror, which is based on labeling ‘Rorschach pairs’ of photos yes/no, forming a projectable graph of personality.

How you license your code and create your business plan is a very important part of every project.